MnM tutorial

The MnM package is a set of modules extending the Max/MSP environment. It is part of the FTM library developed by the Real-Time Applications Team and the Performing Arts Technology Research Team at Ircam. MnM stands for “Music is not Mapping”. The idea is that the interface helps the user (a musician, a dancer…) interact with sound samples in a more complex way than a one-to-one relationship. A common mapping design sets up a simple, univocal correlation between gesture components and sound parameters: for instance, an upward motion of the arm triggers a pitch increase. With MnM, the close integration of motion capture with complex statistical models and sound analysis/resynthesis within an environment such as Max/MSP offers new possibilities for interaction.

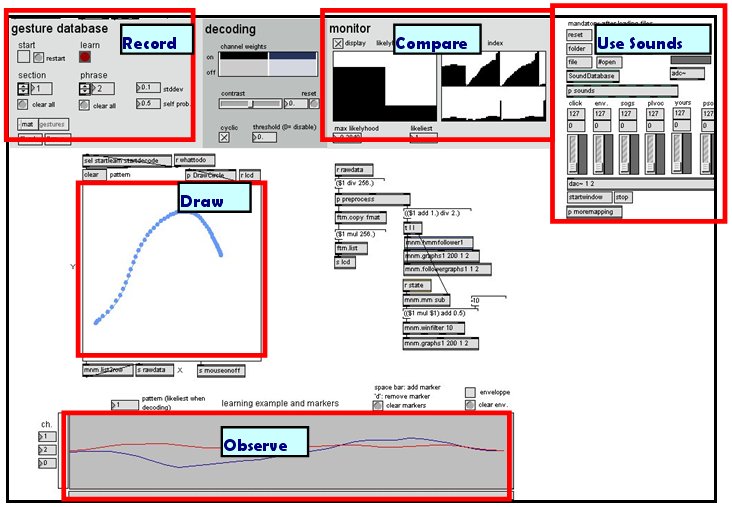

Workspace : overview

Get an overview of the interface functions.

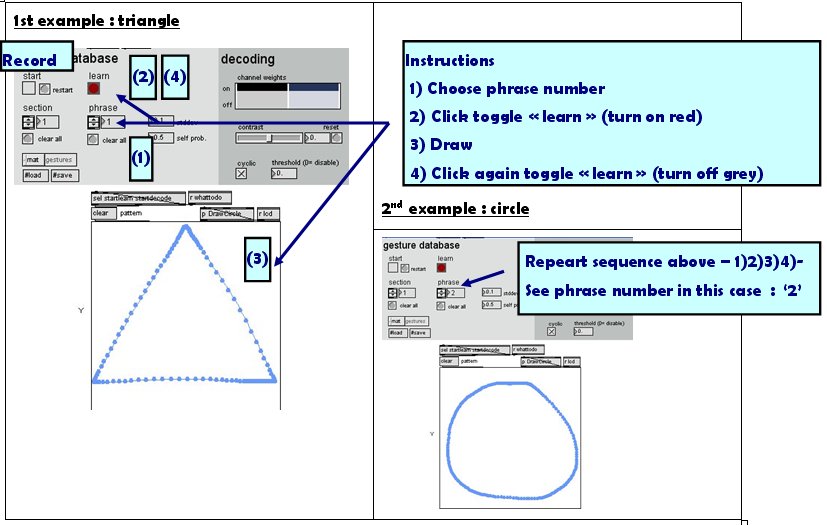

1st STEP : Record gestures

Let’s start with two simple drawings: a triangle and a circle.

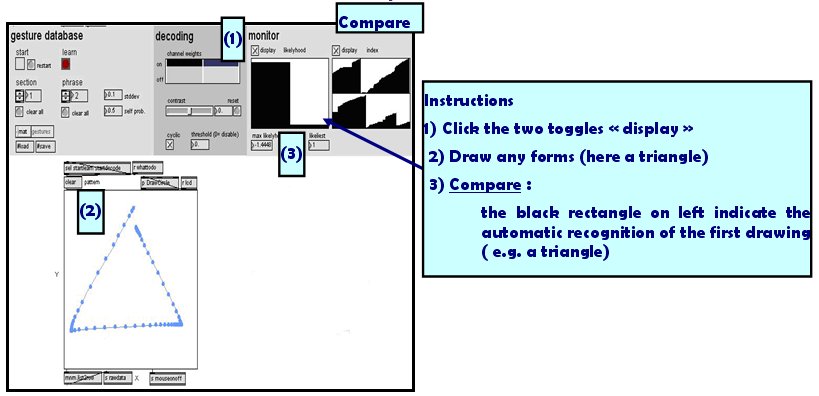

2nd STEP : Compare

Draw a figure, then see how similar it is to your two reference drawings.
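To give a feel for what "how similar" can mean for drawn trajectories, here is a minimal Python sketch. This tutorial does not describe how MnM computes the comparison, so the sketch uses dynamic time warping (DTW) purely as a stand-in technique; the function names `dtw_distance` and `closest_reference` and the sample points are hypothetical.

```python
import math


def dtw_distance(a, b):
    """Dynamic time warping distance between two non-empty lists of (x, y) points.

    DTW is used here only as an illustrative stand-in; it is not
    claimed to be the method MnM uses internally.
    """
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = best accumulated cost aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(a[i - 1], b[j - 1])  # Euclidean distance
            cost[i][j] = d + min(cost[i - 1][j],      # a advances
                                 cost[i][j - 1],      # b advances
                                 cost[i - 1][j - 1])  # both advance
    return cost[n][m]


def closest_reference(drawing, references):
    """Return the name of the reference drawing with the smallest DTW distance."""
    return min(references, key=lambda name: dtw_distance(drawing, references[name]))


# Hypothetical, very coarse reference drawings (a few points each).
REFERENCES = {
    "triangle": [(0, 0), (1, 2), (2, 0), (0, 0)],
    "circle": [(1, 0), (2, 1), (1, 2), (0, 1), (1, 0)],
}
```

Calling `closest_reference(points, REFERENCES)` with a freshly drawn list of points returns the name of the nearest reference. A smaller distance means a closer match, and because DTW aligns the two sequences in time, drawing the same shape faster or slower changes the result less than a point-by-point comparison would.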

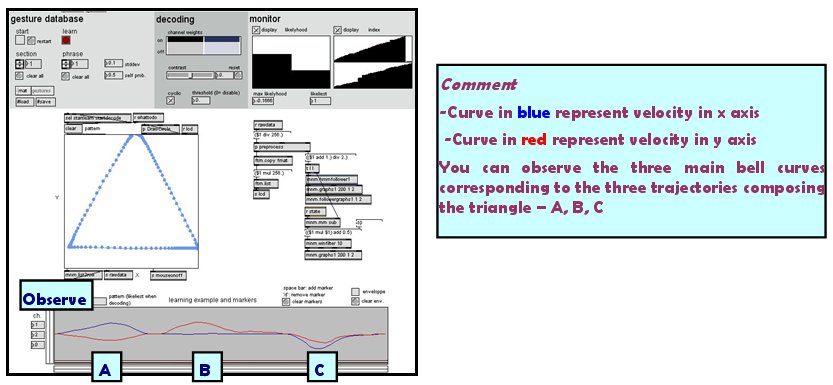

3rd STEP : Observe

Pay attention to the curves below. They represent the velocity of the mouse trajectory along the X and Y axes. This gives useful temporal information about how you perform your drawing.
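As a rough illustration of what these velocity curves contain, the following Python sketch estimates velocity by finite differences between consecutive mouse samples. The `(t, x, y)` sample format and the function name `velocities` are assumptions made for this example, not part of MnM.

```python
def velocities(samples):
    """Estimate (vx, vy) between consecutive mouse samples.

    samples: list of (t, x, y) tuples, with t in seconds and x, y in
    pixels (an assumed format for this sketch).
    Returns one (vx, vy) pair per consecutive pair of samples.
    """
    result = []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            # Skip duplicate or out-of-order timestamps.
            continue
        result.append(((x1 - x0) / dt, (y1 - y0) / dt))
    return result
```

Two drawings with the same shape can produce very different velocity curves, for example when one is drawn in a single smooth stroke and the other with pauses at the corners.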

Connection with EyesWeb XMI

EyesWeb XMI, the open platform for real-time analysis of multimodal interaction, can be connected to Max/MSP through the OSC protocol (Open Sound Control). OSC is an open, message-based protocol that was originally developed for communication between computers and synthesizers (cf. wiki).
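To make "message-based" concrete, here is a minimal Python sketch that builds an OSC message by hand and sends it over UDP. It follows the OSC 1.0 binary layout: a null-terminated address padded to a multiple of 4 bytes, a type tag string padded the same way, then big-endian 32-bit floats. The address `/mouse/velocity`, the host, and the port 7400 are hypothetical values chosen for the example; on the Max side, a `udpreceive` object listening on the same port would be one way to receive the message.

```python
import socket
import struct


def osc_string(s):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = s.encode("ascii") + b"\x00"
    padding = (4 - len(data) % 4) % 4
    return data + b"\x00" * padding


def osc_message(address, *floats):
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)  # e.g. ",ff" for two floats
    payload = b"".join(struct.pack(">f", v) for v in floats)  # big-endian float32
    return osc_string(address) + osc_string(type_tags) + payload


def send_osc(packet, host="127.0.0.1", port=7400):
    """Send one OSC packet over UDP (host and port are assumptions for this sketch)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
```

For example, `send_osc(osc_message("/mouse/velocity", 2.0, 4.0))` sends one message carrying two float arguments. In practice an existing OSC library is usually preferable to hand-rolled encoding; the manual version is shown only to expose the structure of a message.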